Delivering a scalable, cost effective and dynamic modern data warehouse on Azure

The solution was architected for an established global brand operating across Gemstones, Jewellery, and Real Estate, aiming to deliver unified business decision dashboards and consolidated reporting across multiple ERP systems. Legacy reporting was slow, fragmented, and limited to select functional areas, preventing stakeholders from making timely, data driven decisions. To address this, we implemented an analytics first, AI ready data platform transforming three complex ERP ecosystems into a single, scalable enterprise data foundation and enabling truly organization wide intelligence and faster strategic decision making.

Solution delivers:

- Reliable, high quality insights while maintaining strict control over cloud costs.

- Unified dashboards and enterprise reports built on an integrated data model consolidating all critical data points.

- Scalable self service BI capabilities enabled through governed and reusable semantic data models.

Delivery Journey and Collaboration

The solution was delivered through a structured, collaborative, and outcome driven engagement model that ensured alignment across business and IT teams from day one and maintained transparency, governance, and execution discipline throughout the lifecycle.

- Structured discovery and alignment: In person discussions, questionnaires, discovery workshops, and deep dive working sessions to understand scope, data complexity, reporting expectations, and ERP dependencies.

- Business first prioritization: Identification of high impact use cases, KPI definitions, reporting rationalization, and phased delivery aligned to business value realization.

- Technology Evaluation and Platform Selection: Various Architecture Comparisons on basis of performance and cost, demonstrations for Tableau/Power BI/Qliksense dashboards to help stake holder in decision on cloud, tool and visualization tool selections.

- Design validation and buy in: Finally Architecture walkthroughs, stakeholder presentations, data model reviews, and proof of concept and analytics scenarios.

- Agile delivery with governance: Sprint based execution, backlog prioritization, regular demos, and steering committee reviews to maintain delivery momentum and executive visibility.

- Data engineering excellence: Standardized ingestion frameworks, incremental processing strategies, automated orchestration, dependency driven job execution, and parallel pipeline enablement.

- Quality assurance and validation: Continuous data reconciliation with source ERPs, automated validation checks and UAT cycles.

- Operational readiness: Logging and monitoring, failure logging with audit trails, performance optimization, cost monitoring, and production readiness reviews.

- Change management and adoption: User training sessions, documentation, business enablement workshops, Technical video tutorials for handover and guided onboarding for self-service BI adoption.

- Post go live support and optimization: Hypercare support, performance tuning, feedback driven enhancements.

This delivery approach ensured strong alignment between business objectives and technical implementation while enabling predictable execution, stakeholder confidence, and long term platform sustainability.

Design Philosophy

From the start, we anchored the solution on a few non-negotiable architectural principles:

- Decouple storage, compute, and consumption

- Design for change, not just for today’s schema

- Pay only for what you use

- Automate decisions through configuration, not code

- Treat business logic as a first class architectural concern

These principles directly shaped the final architecture and implementation choices.

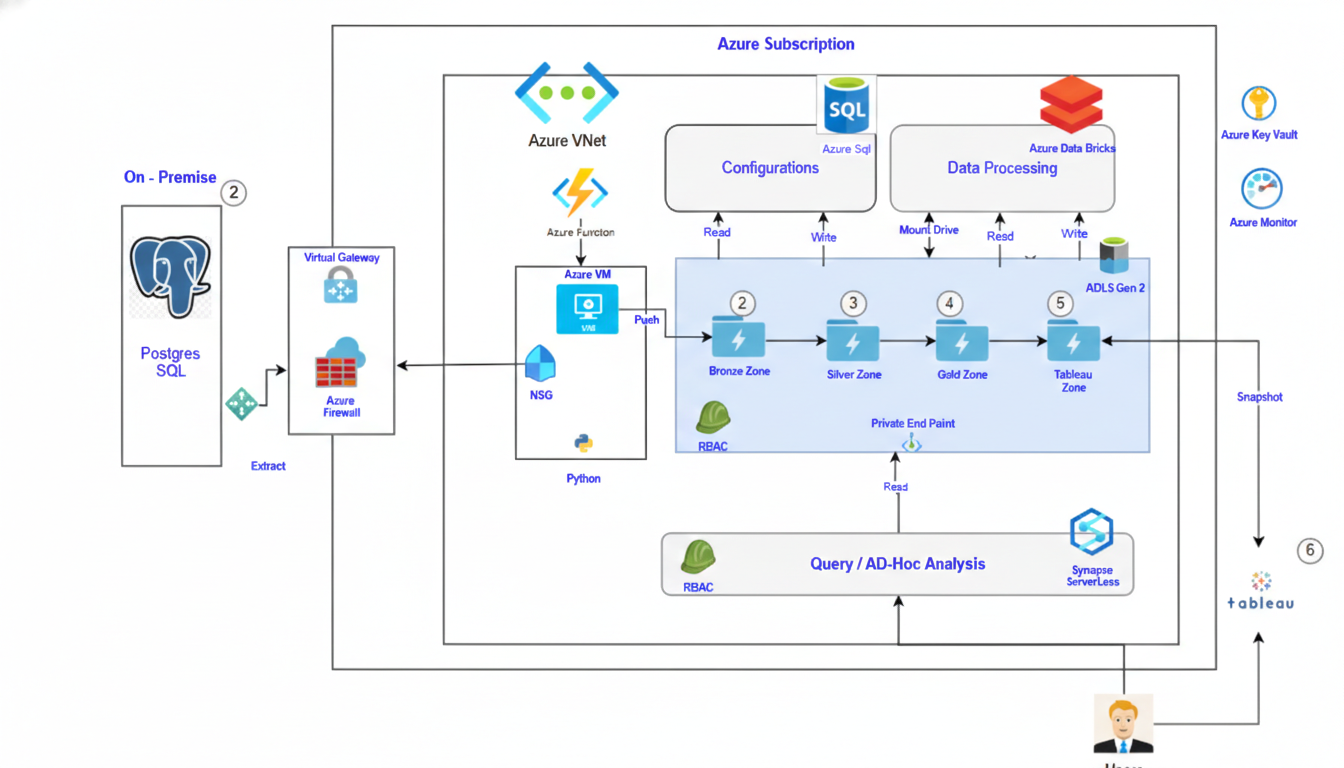

High Level Architecture Overview

The solution follows a Lakehouse based virtual data warehouse pattern built entirely on Azure:

- Azure Data Lake Storage Gen2 (ADLS) as the system of record

- Bronze / Silver / Gold (Medallion) layers for progressive refinement

- Azure Databricks for scalable transformation and orchestration

- Azure Synapse Serverless SQL for analytics and ad hoc querying

- Tableau / BI tools consuming curated Parquet based datasets

This architecture allows us to scale compute independently, support multiple workloads, and avoid expensive always on infrastructure.

Medallion Architecture with Real World Incremental Logic

Bronze Layer – Raw Ingestion

- Raw extracts from ERP and on premises systems

- Minimal transformation

- Immutable historical storage

- Active / Inactive record

Silver Layer – Cleansed and Conformed

- Data quality checks and normalization

- Cross ERP schema alignment

- Incremental logic that handles:

- Late arriving data

- Historical corrections (up to 5 years old)

- Source systems that update records

Gold Layer – Business Ready Analytics

- Business rules, aggregations, and derived metrics

- Designed for reporting and ad hoc analysis

- Optimised Parquet datasets for BI tools and SQL engines

Handling Complex ERP Business Scenarios

This Solution was built to handle non trivial enterprise logic, not simplified demo cases.

Examples include:

- Dashboards

- Sales, Inventory, Production on top of various dimensions

- Sales normalization

- Normal sales, memo sales, approval based sales

- Net of returns and net of commission calculations

- Inventory processing

- Recalculation on every transactional change

- Inventory ageing recalculated on both fact and dimension changes

- Pricing and valuation

- Historical price tracking

- Gain/Loss calculation using price on date logic

- Commission distribution

- Rule driven allocation

- Proportional, sales based, and location based splits

- Dynamic repricing (RAPNET)

- Driven by configurable global attributes (shape, size, colour)

All this logic was implemented in a way that remains transparent, testable, and scalable.

Cost Effective Analytics with Serverless Consumption

A major architectural decision was to avoid traditional, always on data warehouses.Instead, we used:

- Synapse Serverless SQL over Parquet files

- External tables on the gold layer

- Pay per query analytics model

This approach:

- Eliminates idle compute cost

- Supports ad hoc analysis at scale

- Works seamlessly with Tableau and other BI tools

For stakeholders, this translates directly into predictable and controlled cloud spend.

Role Based Access Control (RBAC)

Designed a scalable, metadata driven RBAC framework for a modern data warehouse that supports thousands of users and highly granular business dimensions. Instead of hard coding access, the business controls who can see what data across dimensions like profit centre, department, company, and location without operational dependency on IT.

- Massively scalable design: Handles millions of potential users–data combinations without performance or maintenance overhead.

- Business managed access: Users can activate or deactivate access through a simple form, with orchestration handled automatically.

- End to end enforcement: Access is governed at the DWH layer and consistently propagated through to Tableau, ensuring secure and compliant analytics.

- Architectural Scalability: Our design eliminates the “n+1” configuration nightmare, maintaining high performance even as user dimension combinations scale into the millions

Intelligent Orchestration and Automation

We implemented a metadata driven orchestration framework that provides fine grained control, resilience, and transparency across the data pipeline landscape. Pipelines are dynamically enabled or disabled based on configuration, dependencies, and source data availability eliminating manual intervention while maximizing throughput.

- Smart, dependency aware execution: Pipelines self monitor using a dependency matrix and source data checks, enabling safe parallel processing and automatic execution sequencing.

- Flexible incremental processing: Supports timestamp based incremental loads while allowing controlled reprocessing from any historical date when required.

- End to end observability: Every run is fully audited, capturing failures, record level insert/update counts, execution dates, and pipeline level lineage for complete operational visibility.

Dynamic and Configuration Driven Pipelines

One of the strongest differentiators of this solution is the dynamic pipeline framework.Instead of hardcoding ingestion and transformation logic, we implemented a configuration driven orchestration model, where pipeline behaviour up to silver layer is controlled by metadata tables.

This enables:

- Dynamic enable/disable of source scenarios

- Incremental processing without redeploying code

- Dependency aware execution across layers

- Faster onboarding of new data sources

Key benefits:

- Reduced operational overhead

- Lower cost due to fewer unnecessary runs

- Easier maintenance and extensibility

Security, Governance, and Enterprise Readiness

Security and governance were embedded into the design, not added later:

- Role Based Access Control (RBAC) across Tableau layers

- Tableau site access secured through IP based access restrictions to ensure controlled and authorized connectivity

- AD based Authentication and Authorization on Storage

- Private endpoints and Venet integration

- Network Security Groups and firewall rules

- Centralized secrets management via Key Vault

- Monitoring and alerting using Azure Monitor and Defender for Cloud

This ensures the solution is enterprise grade and audit ready.

AI Accelerated Delivery

To meet aggressive timelines and cost constraints, we have used AI across the delivery as an accelerator. This approach significantly reduced development effort while improving consistency and quality across a highly complex data landscape.

- Dynamic Pipeline Development Acceleration:

Once our approach was clear and meta data structure in place , we have used AI to design code for different part of pipeline.

- Automated SQL & Transformation Logic:

AI was leveraged to draft, optimize, and standardize complex SQL scripts across fact and dimension layers, ensuring performance, reusability, and alignment with business logic.

- AI Enabled Data Validation & Reconciliation:

Leveraged Agentic AI to compare and validate different dataset quickly, you just need to prompt and provide structure to AI , it will write a compare and validation code for you.

- Faster Time to Value:

By compressing build and test cycles, the client gained faster access to reliable insights across inventory, revenue, and profitability without compromising governance or scalability.

Why This Architecture Works

This solution succeeds because it balances engineering rigor with business pragmatism.

In summary, it delivers:

- Scalability without architectural complexity

- Cost efficiency without sacrificing performance

- Dynamic pipelines that adapt to change

- Strong governance without slowing teams down

- A future ready foundation for AI and advanced analytics

Final Thoughts

The modern data warehouse and Tableau AI–powered self service BI platform now provide a single source of truth across inventory, revenue, and profitability drilled across geographies, product hierarchies, and business lines. Most importantly, the solution balances cost efficiency with future readiness, empowering business users to make faster, data driven decisions while positioning the organization for continued global growth and analytics maturity.

Written By: Chinmay Jain